Two-way Gaussian Mixture Models for High Dimensional.

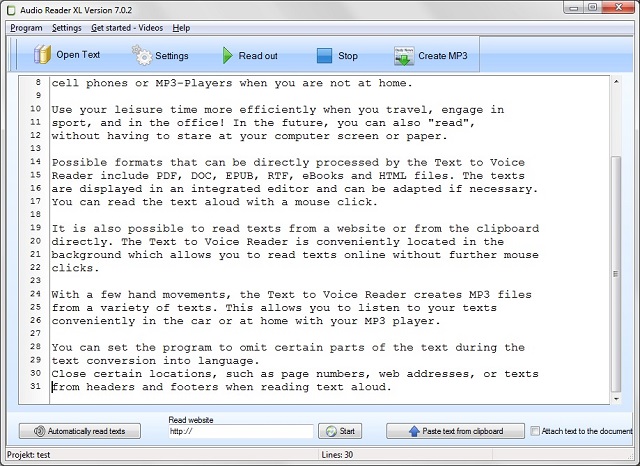

Classifying with Gaussian Mixtures and Clusters

… et al. 1977, Nowlan 1991) to determine the parameters for the Gaussian mixture density for each class.

3 Winner-take-all approximations to GMB classifiers

In this section, we derive winner-take-all (WTA) approximations to GMB classifiers.

Structure

General mixture model. A typical finite-dimensional mixture model is a hierarchical model consisting of the following components: N random variables that are observed, each distributed according to a mixture of K components, with the components belonging to the same parametric family of distributions (e.g., all normal, all Zipfian, etc.) but with different parameters.

Here, we study the two-way mixture of Gaussians for continuous data and derive its estimation method. The issue of missing data that tends to arise when the dimension is extremely high is addressed. Experiments are conducted on several real data sets with moderate to very high dimensions. A dimension reduction property of the two-way mixture of distributions from any exponential family is…
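The general mixture structure described above (N observations, each drawn from one of K components of the same family) can be illustrated with a minimal NumPy sketch. It assumes spherical Gaussian components, which is a simplification chosen here for brevity and is not the two-way model itself; the function names `sample_mixture` and `mixture_log_density` are hypothetical.

```python
import numpy as np

def sample_mixture(n, weights, means, stds, rng):
    """Draw n points from a K-component spherical Gaussian mixture (illustrative)."""
    weights = np.asarray(weights, dtype=float)   # shape (K,), sums to 1
    means = np.asarray(means, dtype=float)       # shape (K, d)
    stds = np.asarray(stds, dtype=float)         # shape (K,)
    # Hierarchical step 1: pick a latent component label for each observation.
    z = rng.choice(len(weights), size=n, p=weights)
    # Hierarchical step 2: draw the observation from the chosen component.
    x = means[z] + stds[z, None] * rng.standard_normal((n, means.shape[1]))
    return x, z

def mixture_log_density(x, weights, means, stds):
    """Row-wise log p(x) = log sum_k w_k N(x | mu_k, sigma_k^2 I)."""
    x = np.atleast_2d(np.asarray(x, dtype=float))
    weights = np.asarray(weights, dtype=float)
    means = np.asarray(means, dtype=float)
    stds = np.asarray(stds, dtype=float)
    d = x.shape[1]
    # Squared distance from every point to every component mean, shape (n, K).
    sq = ((x[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    log_comp = -0.5 * sq / stds**2 - d * np.log(stds) - 0.5 * d * np.log(2 * np.pi)
    a = np.log(weights) + log_comp
    # Log-sum-exp over components, shifted by the row maximum for stability.
    m = a.max(axis=1, keepdims=True)
    return (m + np.log(np.exp(a - m).sum(axis=1, keepdims=True))).ravel()
```

For example, `sample_mixture(500, [0.3, 0.7], [[0, 0], [5, 5]], [1.0, 0.5], np.random.default_rng(0))` returns 500 two-dimensional points together with the latent label of each point.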

A GMM is not a classifier but a generative model. You can apply it to a classification problem by using Bayes' theorem. It is not true that classification based on GMMs works only for trivial problems. However, because the model is a mixture of Gaussian components, it fits best on problems with high-level features. Your code incorrectly uses the GMM as a classifier.

The Gaussian Mixture Model Classifier (GMM) is a basic but useful classification algorithm that can be used to classify an N-dimensional signal. In this example we create an instance of a GMM classifier and then train the algorithm using some pre-recorded training data. The trained GMM algorithm is then used to predict the class label of some…
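One way to turn the generative model into a classifier through Bayes' theorem is to fit one mixture per class and pick the class with the largest posterior. The sketch below is a minimal NumPy illustration under stated assumptions: diagonal covariances, a fixed number of EM iterations with no convergence check, and class priors estimated from label frequencies. All function names are hypothetical; this is not the API of any particular toolkit.

```python
import numpy as np

def _log_components(X, w, mu, var):
    """log(w_k * N(x | mu_k, diag(var_k))) for every row of X; shape (n, K)."""
    diff = X[:, None, :] - mu[None, :, :]
    log_pdf = -0.5 * ((diff ** 2) / var + np.log(2 * np.pi * var)).sum(axis=2)
    return np.log(w) + log_pdf

def _logsumexp(a):
    """Stable log-sum-exp along axis 1."""
    m = a.max(axis=1, keepdims=True)
    return (m + np.log(np.exp(a - m).sum(axis=1, keepdims=True))).ravel()

def fit_gmm(X, k, n_iter=50, seed=0):
    """Fit a diagonal-covariance GMM with plain EM (assumed settings)."""
    rng = np.random.default_rng(seed)
    n, _ = X.shape
    w = np.full(k, 1.0 / k)
    mu = X[rng.choice(n, size=k, replace=False)].copy()
    var = np.tile(X.var(axis=0) + 1e-6, (k, 1))
    for _ in range(n_iter):
        # E-step: responsibilities r[i, j] = P(component j | x_i).
        log_r = _log_components(X, w, mu, var)
        r = np.exp(log_r - _logsumexp(log_r)[:, None])
        # M-step: weighted updates of weights, means, and variances.
        nk = r.sum(axis=0) + 1e-12
        w = nk / nk.sum()
        mu = (r.T @ X) / nk[:, None]
        var = np.maximum((r.T @ X**2) / nk[:, None] - mu**2, 1e-6)
    return w, mu, var

def fit_classifier(X, y, k=2):
    """Fit one GMM per class and estimate priors from label frequencies."""
    classes = np.unique(y)
    priors = np.array([(y == c).mean() for c in classes])
    models = [fit_gmm(X[y == c], k) for c in classes]
    return classes, priors, models

def predict(clf, X):
    """Return the class with the largest posterior for each row of X."""
    classes, priors, models = clf
    # Bayes' theorem: log P(c | x) = log p(x | c) + log P(c) - log p(x).
    # log p(x) is the same for every class, so the argmax can ignore it.
    scores = np.column_stack([
        _logsumexp(_log_components(X, *m)) + np.log(p)
        for m, p in zip(models, priors)
    ])
    return classes[scores.argmax(axis=1)]
```

Calling `clf = fit_classifier(X_train, y_train, k=2)` followed by `predict(clf, X_test)` returns one predicted label per row. The mixture densities are only ever used as class-conditional likelihoods; the decision itself comes from comparing posteriors.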

Second, we show an exact expression for the convergence of our variant of the 2-means algorithm, when the input is a very large number of samples from a mixture of spherical Gaussians. Our analysis does not require any lower bound on the separation between the mixture components.
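For orientation, the standard 2-means (Lloyd) iteration on samples from a mixture of two spherical Gaussians can be sketched as follows. This is the textbook procedure, not the variant analyzed in the excerpt above, whose details are not given here; the function name `two_means`, the random initialization, and the fixed iteration count are assumptions.

```python
import numpy as np

def two_means(X, n_iter=20, seed=0):
    """Textbook Lloyd iteration with k = 2 (not the excerpt's variant)."""
    rng = np.random.default_rng(seed)
    # Initialize the two centers at distinct, randomly chosen data points.
    centers = X[rng.choice(len(X), size=2, replace=False)].astype(float)
    for _ in range(n_iter):
        # Assignment step: each point goes to its nearest center.
        d = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d.argmin(axis=1)
        # Update step: each center moves to the mean of its assigned points.
        for j in range(2):
            if np.any(labels == j):
                centers[j] = X[labels == j].mean(axis=0)
    return centers, labels
```

On well-separated data the two centers end up close to the component means; when the components overlap heavily, the hard assignment pulls the centers away from the true means.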